Digital Transformation

What is Digital Transformation and Why?

If you are interested in technology, you are probably familiar with the term “digital transformation”. It is a buzzword everywhere, from big enterprises to disruptors and small startups. The intent is to stay ahead of the game, which I will cover in the “why” part a little later, so hang on. At its core, digital transformation is driving companies to reframe their relationships with their customers, suppliers, and employees by leveraging new technologies to engage in ways that were not possible before.

Digital transformation (DT) marks a radical rethinking of how an organization uses technology, people, and processes to fundamentally change business performance. People and process (adopting Agile is a big play here) are a key part of making it a success, but that’s a conversation for some other time. Here I will focus on the technology aspect and on an architecture that can serve as a stepping stone to better business performance.

Now, let’s circle back to the “why” of DT through the lens of technology: to achieve the business goals, we need an architecture that supports the following:

- Faster IDEA CYCLE TIME

- Modularized SOLUTION ARCHITECTURE

- Continuous SOLUTION INTEGRATION

- Faster TESTING AND QA TIME

- Autonomous DEPLOYMENTS

The Architecture

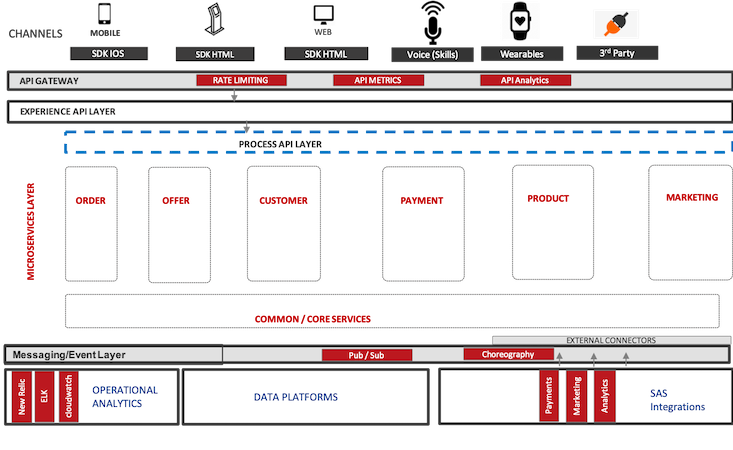

Now let’s take a deep dive into the logical components of this architecture.

Channels: For a successful business transformation, the outreach needs to be on all digital fronts. That means all the channels need to be unified and speak the same language, so the architecture must be able to meet the needs of existing and upcoming channels. Web and mobile have been around for some time, but voice skills and wearables are catching up really fast, which means all the underlying components need to be ready for them. Each channel has its own needs and technologies, which brings the SDK into the picture. The SDK is responsible for laying the foundation of the integration with the underlying API world, e.g. iOS and Android SDKs for mobile, while web and kiosk use HTML-based front-end technologies. An SDK would have modules like networking, caching, configuration, etc.
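To make the SDK modules a bit more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the names `SdkConfig`, `TtlCache`, `ChannelSdk`, and the `X-Channel` header are hypothetical, and the networking module is reduced to an injected function so the example runs without a real network.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass(frozen=True)
class SdkConfig:
    """Configuration module: per-channel settings (all values hypothetical)."""
    base_url: str
    channel: str
    cache_ttl_seconds: float = 30.0


class TtlCache:
    """Caching module: keeps responses for a fixed time-to-live."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str) -> Any:
        entry = self._entries.get(key)
        if entry is None:
            return None
        stored_at, value = entry
        if self._clock() - stored_at > self._ttl:
            del self._entries[key]  # entry expired, drop it
            return None
        return value

    def put(self, key: str, value: Any) -> None:
        self._entries[key] = (self._clock(), value)


class ChannelSdk:
    """Ties configuration, caching, and networking together for one channel."""

    def __init__(self, config: SdkConfig,
                 transport: Callable[[str, Dict[str, str]], Any]) -> None:
        self._config = config
        self._transport = transport  # networking module, injected so it can be faked
        self._cache = TtlCache(config.cache_ttl_seconds)

    def get(self, path: str) -> Any:
        url = self._config.base_url + path
        cached = self._cache.get(url)
        if cached is not None:
            return cached
        # Tag every call with the channel so the API side can tell channels apart.
        response = self._transport(url, {"X-Channel": self._config.channel})
        self._cache.put(url, response)
        return response
```

A web, kiosk, or mobile SDK would each supply its own transport and configuration, while the calling code stays the same across channels.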

API Gateway: Now that you have the channels and their SDKs, they need to be able to talk to the APIs, and that’s where the API gateway shines. It is the first secure entryway to your API infrastructure. I already underlined security in the previous sentence, but I can’t stress its importance enough. Precisely for this reason, API gateways come with security and rate-limiting policies. Along with this, API gateways are very useful for capturing API metrics and gathering raw analytics. AWS API Gateway and Mule ESB are some examples.
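As an illustration of what a rate-limiting policy does, here is a small token-bucket sketch in Python. The token bucket is one common approach to rate limiting; this is not the implementation of any particular gateway product, and the class name and parameters are assumptions made for the example.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity`, then a steady `refill_per_second` rate."""

    def __init__(self, capacity: int, refill_per_second: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._capacity = float(capacity)
        self._refill = refill_per_second
        self._clock = clock  # injectable so the behaviour can be tested
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self) -> bool:
        """Return True if the request may pass, False if it should be throttled."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Add the tokens earned since the last call, capped at the capacity.
        self._tokens = min(self._capacity, self._tokens + elapsed * self._refill)
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False
```

In a gateway, one bucket would typically be kept per API key or per client, and a rejected request would be answered with HTTP 429 (Too Many Requests).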

Experience API: These will be the only public APIs in the entire API layer, and the only ones that channels access directly. They would be largely channel agnostic, but flexible enough to let channel developers make them channel specific in the respective SDK. This layer exists to provide an abstraction of different functions from an API client’s perspective, allowing for configurable attributes such as pagination and field filtering. GraphQL is a natural fit for this use case, though you can write this layer in any language or stack.
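The pagination and field filtering mentioned above can be sketched without GraphQL as a plain function. This is only an illustration under assumed names: `shape_response`, its parameters, and the shape of the returned envelope are all hypothetical.

```python
from typing import Any, Dict, Optional, Sequence


def shape_response(items: Sequence[Dict[str, Any]],
                   fields: Optional[Sequence[str]] = None,
                   page: int = 1,
                   page_size: int = 10) -> Dict[str, Any]:
    """Return one page of items, keeping only the fields the client asked for."""
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be >= 1")
    start = (page - 1) * page_size
    window = items[start:start + page_size]
    if fields is not None:
        # Field filtering: drop everything the channel did not request.
        shaped = [{k: item[k] for k in fields if k in item} for item in window]
    else:
        shaped = [dict(item) for item in window]
    return {"items": shaped, "page": page,
            "page_size": page_size, "total": len(items)}
```

A wearable channel might request two fields and a page size of three, while the web channel asks for the full record, both against the same Experience API.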

Process API: This is an optional layer. A Process API is needed where complex orchestration or aggregation requires managing multiple calls to different domain APIs (System APIs or microservices). If there is no case for complex orchestration or aggregation, Experience APIs communicate directly with microservices or System APIs.
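Here is a minimal sketch of the kind of aggregation a Process API performs, assuming three hypothetical domain calls (`fetch_profile`, `fetch_orders`, `fetch_offers`) that are passed in as plain functions. In a real system they would be HTTP calls to microservices or System APIs.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict, List


def get_customer_dashboard(
    customer_id: str,
    fetch_profile: Callable[[str], Dict[str, Any]],
    fetch_orders: Callable[[str], List[Any]],
    fetch_offers: Callable[[str], List[Any]],
) -> Dict[str, Any]:
    """Call three domain APIs in parallel and merge the results into one payload."""
    with ThreadPoolExecutor(max_workers=3) as pool:
        profile_future = pool.submit(fetch_profile, customer_id)
        orders_future = pool.submit(fetch_orders, customer_id)
        offers_future = pool.submit(fetch_offers, customer_id)
        # result() re-raises any exception from the underlying call.
        return {
            "profile": profile_future.result(),
            "orders": orders_future.result(),
            "offers": offers_future.result(),
        }
```

The Experience API then makes a single call to this function instead of coordinating three domain calls itself.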

Microservices: Where access to core domain services such as Offers, Order, Profile, etc. is required, the recommended way is to go through APIs exposed by microservices. Microservices should be self-contained, independently deployable, and manage the data and the business logic associated with a single bounded context. I could say a ton about microservices alone, but that’s not the point here. Large enterprises, or businesses that are still early in their DT journey, usually end up creating System APIs. This layer is optional. It exists only to provide a microservice-like facade on a monolithic application that has not yet been broken into microservices. System APIs provide a microservice abstraction and help create bounded contexts for accessing functions within the monolith, using modern architectural paradigms such as single bounded contexts and self-contained APIs. Over the course of time, they will become true microservices.
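The System API idea can be sketched as a thin facade. In the Python example below, `LegacyMonolith`, its `exec_proc` method, the procedure name, and the column names are all made up to stand in for a real monolith; the point is only that callers see a clean, single-context Profile API.

```python
from typing import Any, Dict, Optional


class LegacyMonolith:
    """Stand-in for a monolith with a wide, mixed-concern interface (hypothetical)."""

    def __init__(self) -> None:
        self._rows = {
            "c-1": {"CUST_NM": "Ada", "CUST_EMAIL": "ada@example.com", "ORD_CNT": 3},
        }

    def exec_proc(self, proc_name: str, key: str) -> Optional[Dict[str, Any]]:
        if proc_name != "GET_CUST_REC":
            raise ValueError(f"unknown procedure: {proc_name}")
        return self._rows.get(key)


class ProfileSystemApi:
    """Microservice-like facade exposing only the Profile bounded context."""

    def __init__(self, monolith: LegacyMonolith) -> None:
        self._monolith = monolith

    def get_profile(self, customer_id: str) -> Optional[Dict[str, Any]]:
        row = self._monolith.exec_proc("GET_CUST_REC", customer_id)
        if row is None:
            return None
        # Translate legacy column names into the domain model and hide
        # fields that belong to other contexts (e.g. order counts).
        return {"id": customer_id, "name": row["CUST_NM"], "email": row["CUST_EMAIL"]}
```

When the Profile functionality is later extracted into a true microservice, callers of `get_profile` should not need to change.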

Messaging Layer: Up to the layer above, we have completed the business end of the transaction that matters to the end customer. But each microservice is also responsible for sharing that user information or those actions with other services or downstream/legacy systems. The messaging layer is the foundation for that. Pub/sub or choreography patterns are used for this integration. The key point of this layer is to bring loose coupling to the architecture.
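The loose coupling that pub/sub brings can be shown with a tiny in-memory broker. This is a sketch for illustration only: `InMemoryBroker` and the topic name are hypothetical, and a production setup would use a real broker with durable, asynchronous delivery.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Handler = Callable[[Dict[str, Any]], None]


class InMemoryBroker:
    """Minimal synchronous pub/sub: publishers never reference subscribers."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Dict[str, Any]) -> int:
        """Deliver a copy of the event to every subscriber; return how many received it."""
        handlers = list(self._subscribers.get(topic, []))
        for handler in handlers:
            handler(dict(event))  # copy so one subscriber cannot mutate another's view
        return len(handlers)
```

The order microservice only publishes an `order.placed` event. Whether a loyalty system, a legacy system, or nobody at all is listening does not change its code.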

This layer can be used by the Experience and Process APIs as well to implement the pub/sub or choreography patterns. AWS SQS, Kinesis, Apache Kafka, and RabbitMQ are some leading contenders for this layer.

Data Layer: Data-led transformation is the way to go. Every aspect of the user journey and every touch point creates a huge data footprint. This data needs to be captured, massaged, and analyzed on a regular basis so that it can be fed back into the marketing process via campaigns, etc. ML/AI plays a pivotal role here in feeding these data insights back to the transactional systems in an automated fashion. You must have noticed how quickly the browsing history on your phone is used by e-commerce apps to upsell. That’s all data magic.

SaaS Integrations: To stay ahead of the competition, most companies use SaaS-based solutions for certain aspects because of the vendors’ niche expertise in that space. Factors like custom development cost, security, etc. also contribute to the use of SaaS offerings. Identity is a very common use case. Microservices can connect to these SaaS endpoints directly, or the integration can go through the messaging layer, depending on the use case. One common scenario for the messaging layer is loyalty, where user information can be sent in a decoupled fashion.

Operation Analytics: All of the above could become a nightmare rather than a success story if operation analytics is not set up. Imagine running 50+ microservices with autoscaling pods and serverless components and no monitoring on them. It can backfire very quickly. So it is imperative to have operation analytics set up for all the components in the architecture. Log aggregation with a correlation ID, APM, infrastructure monitoring, and monitoring for cloud components are decisive to success. Tools like the ELK (Elasticsearch, Logstash, Kibana) stack, New Relic, AppDynamics, AWS CloudWatch, and X-Ray, to name a few, can monitor, alert, and take automated actions on issues.
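Log aggregation with a correlation ID is easier to picture with a small example. The sketch below uses only Python’s standard `logging` and `contextvars` modules; the helper names (`build_logger`, `handle_request`), the log format, and the service name are assumptions for illustration.

```python
import contextvars
import logging
import uuid
from typing import Optional, TextIO

# Holds the ID of the request currently being processed ("-" when there is none).
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class CorrelationIdFilter(logging.Filter):
    """Stamps every log record with the current correlation ID."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.correlation_id = correlation_id.get()
        return True


def build_logger(name: str, stream: TextIO) -> logging.Logger:
    handler = logging.StreamHandler(stream)
    handler.addFilter(CorrelationIdFilter())
    handler.setFormatter(
        logging.Formatter("%(correlation_id)s %(name)s %(message)s"))
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    logger.propagate = False  # avoid duplicate output via the root logger
    return logger


def handle_request(logger: logging.Logger,
                   incoming_id: Optional[str] = None) -> str:
    """Reuse the caller's correlation ID if present, otherwise create one."""
    cid = incoming_id or uuid.uuid4().hex
    token = correlation_id.set(cid)
    try:
        logger.info("order received")
        logger.info("order persisted")
    finally:
        correlation_id.reset(token)
    return cid
```

If every service forwards the same ID on its outgoing calls and events, a search for that ID in the aggregated logs reconstructs the whole journey of one request across all the microservices.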

I have implemented this in all of my DT projects and seen tremendous success. There were hiccups, of course, but eventually it proved to be the difference.

I cover a reference architecture in the next part, here.

Connect with Me

If you are interested in a conversation or a chat, please reach me on LinkedIn.